How Large Language Models Will Transform Science, Society, and AI

Shana Lynch

GPT-3 can translate language, write essays, generate computer code, and more — all with limited to no supervision.

In July 2020, OpenAI unveiled GPT-3, a language model that was easily the largest known at the time. Put simply, GPT-3 is trained to predict the next word in a sentence, much like how a text message autocomplete feature works. However, model developers and early users demonstrated that it had surprising capabilities, like the ability to write convincing essays, create charts and websites from text descriptions, generate computer code, and more — all with limited to no supervision. The model also has shortcomings. For example, it can generate racist, sexist, and bigoted text, as well as superficially plausible content that, upon further inspection, is factually inaccurate, undesirable, or unpredictable.
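To make the autocomplete comparison concrete, here is a minimal sketch of next-word prediction. It is a toy illustration only: it counts which word most often follows another in a tiny made-up corpus, whereas GPT-3 uses a very large neural network trained on far more text. The function names and the sample corpus are hypothetical and do not come from OpenAI.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        following[current_word][next_word] += 1
    return following


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Tiny made-up corpus, for illustration only.
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))  # "cat" follows "the" most often here
print(predict_next_word(model, "on"))   # "the" always follows "on" here
```

The sketch shares only the training objective with a large language model: guess the next word from what came before. Everything the article describes as surprising comes from pursuing that objective at a vastly larger scale.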

To better understand GPT-3’s capabilities, limitations, and potential impact on society, HAI convened researchers from OpenAI, Stanford, and other universities in a Chatham House Rule workshop. Below are some takeaways from the discussion. A more detailed summary can be found here.

As language models grow, their capabilities change in unexpected ways

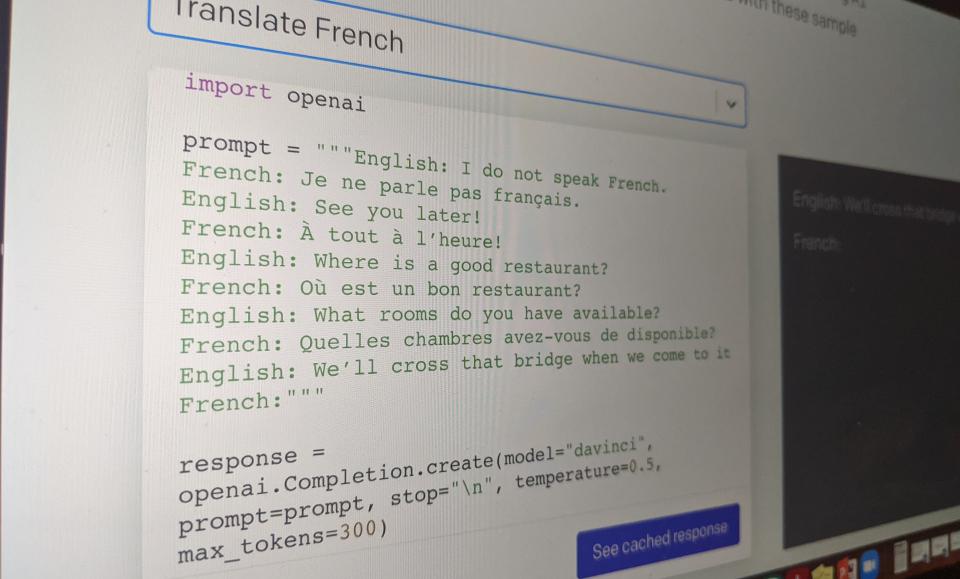

GPT-3 has 175 billion parameters and was trained on 570 gigabytes of text. For comparison, its predecessor, GPT-2, was over 100 times smaller, at 1.5 billion parameters. This increase in scale drastically changes the behavior of the model — GPT-3 is able to perform tasks it was not explicitly trained on, like translating sentences from English to French, with few to no training examples. This behavior was mostly absent in GPT-2. Furthermore, for some tasks, GPT-3 outperforms models that were explicitly trained to solve those tasks, although in other tasks it falls short. Workshop participants said they were surprised that such behavior emerges from simple scaling of data and computational resources and expressed curiosity about what further capabilities would emerge from further scale.
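As a rough sketch of what "few to no training examples" can look like in practice, the snippet below assembles a text prompt containing a handful of English-to-French pairs followed by a new sentence for the model to complete. The prompt layout, the function name, and the example sentences are assumptions made for illustration, and no model is actually called.

```python
def build_few_shot_prompt(examples, query):
    """Assemble a translation prompt from (English, French) example pairs.

    The layout is a hypothetical one chosen for illustration; it is not
    an official prompt format.
    """
    lines = ["Translate English to French."]
    for english, french in examples:
        lines.append(f"English: {english}")
        lines.append(f"French: {french}")
    # The final entry is left unfinished so the model supplies the translation.
    lines.append(f"English: {query}")
    lines.append("French:")
    return "\n".join(lines)


examples = [
    ("Good morning.", "Bonjour."),
    ("Thank you very much.", "Merci beaucoup."),
]
prompt = build_few_shot_prompt(examples, "Where is the library?")
print(prompt)
```

Passing an empty list of examples gives the "no training examples" case: the prompt then holds only the instruction and the sentence to translate.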

GPT-3’s uses and their downstream effects on the economy are unknown

GPT-3 has an unusually large set of capabilities, including text summarization, chatbot dialogue, search, and code generation. Future users are likely to discover even more capabilities. This makes it difficult to characterize all possible uses (and misuses) of large language models in order to forecast the impact GPT-3 might have on society. Furthermore, it’s unclear what effect highly capable models will have on the labor market. This raises the question of which jobs could (or should) be automated by large language models, and when.

Is GPT-3 intelligent, and does it matter?

Unlike chess engines, which solve a specific problem, humans are “generally” intelligent and can learn to do anything from writing poetry to playing soccer to filing tax returns. In contrast to most current AI systems, GPT-3 is edging closer to such general intelligence, workshop participants agreed. However, participants differed in terms of where they felt GPT-3 fell short in this regard.

Some participants said that GPT-3 lacked intentions, goals, and the ability to understand cause and effect — all hallmarks of human cognition. On the other hand, some noted that GPT-3 might not need to understand to successfully perform tasks — after all, a non-French speaker recently won the French Scrabble championship.

Future models won’t be restricted to learning just from language

GPT-3 was trained primarily on text. Participants agreed that future language models would be trained on data from other modalities (e.g., images, audio recordings, and videos) to enable more diverse capabilities, provide a stronger learning signal, and increase learning speed. In fact, shortly after the workshop, OpenAI took a step in this direction and released a model called DALL-E, a version of GPT-3 that generates images from text descriptions. One surprising aspect of DALL-E is its ability to sensibly synthesize visual images from whimsical text descriptions. For example, it can generate a convincing rendition of “a baby daikon radish in a tutu walking a dog.”

Some workshop participants also felt future models should be embodied, meaning that they should be situated in an environment they can interact with. Some argued this would help models learn cause and effect the way humans do, through physically interacting with their surroundings.

Disinformation is a real concern, but several unknowns remain

Models like GPT-3 can be used to create false or misleading essays, tweets, or news stories. Still, participants questioned whether it’s easier, cheaper, and more effective to hire humans to create such propaganda. One held that we could learn from similar calls of alarm when the photo-editing software program Photoshop was developed. Most agreed that we need a better understanding of the economics of automated versus human-generated disinformation before we understand how much of a threat GPT-3 poses.

Future models won’t merely reflect the data — they will reflect our chosen values

GPT-3 can exhibit undesirable behavior, including known racial, gender, and religious biases. Participants noted that it’s difficult to define what it means to mitigate such behavior in a universal manner, whether in the training data or in the trained model, since appropriate language use varies across contexts and cultures. Nevertheless, participants discussed several potential solutions, including filtering the training data or model outputs, changing the way the model is trained, and learning from human feedback and testing. However, participants agreed that there is no silver bullet and that further cross-disciplinary research is needed on what values we should imbue these models with and how to accomplish this.

We should develop norms and principles for deploying language models now

Who should build and deploy these large language models? How will they be held accountable for possible harms resulting from poor performance, bias, or misuse? Workshop participants considered a range of ideas: increasing resources available to universities so that academia can build and evaluate new models, legally requiring disclosure when AI is used to generate synthetic media, and developing tools and metrics to evaluate possible harms and misuses.

Pervading the workshop conversation was also a sense of urgency — organizations developing large language models will have only a short window of opportunity before others develop similar or better models. Those currently on the cutting edge, participants argued, have a unique ability and responsibility to set norms and guidelines that others may follow.

Want to learn more about the workshop’s main points? Read the whitepaper.
