Meet CoAuthor, an Experiment in Human-AI Collaborative Writing

*This article is an existential crisis. It is written by a professional writer, writing about artificial intelligence that helps writers write. There's a lot of nagging doubt in my mind about this. Is that okay? I mean, shouldn't humans write their own content? And does this mean the writing is on the wall for an entire profession? Will there be no more writers? We all have to ask ourselves what our roles in this brave new world will be.*

The italicized text above and below was written by a large language model. Professional writers need not fear for their careers just yet. Still, the example above suggests the model does a good job of grasping the topic at hand and sensing its co-writer’s (my) existential dread.

Meet “CoAuthor.” It’s an interface, a dataset, and an experiment all in one. CoAuthor was created by three people. The first is Mina Lee, a doctoral student in computer science at Stanford University. The second is her advisor, Percy Liang, a Stanford associate professor of computer science and director of the Center for Research on Foundation Models, which was born out of the Stanford Institute for Human-Centered Artificial Intelligence. The third is her collaborator, Qian Yang, an assistant professor at Cornell University.

“We believe language models have a huge potential to help our writing process. People are already finding these models to be useful and incorporating them into their workflows. For example, there are several books and award-winning essays co-authored with such models,” Lee says.

Based on her experiments, Lee believes that language models are most useful and powerful when they augment human writing skills rather than replace them.

“We think of a language model as a ‘collaborator’ in the writing process that can enhance human productivity and creativity, helping to write more expressively and faster,” she says.

Intangibles

AI that helps people write is not new. Google’s predictive search is an easy example, as are the next-word text suggestion algorithms on a smartphone. Other apps help you compose an email or even write code. So why not create AI that helps humans write well?

Writing computer code or a text to your friend is a far cry from writing an arresting poem or a deft essay. Those pieces require creative writers who invent combinations of words that are original, interesting, and thought provoking. It's hard to imagine a machine writing, say, Cormac McCarthy. But perhaps all that's missing is the right artificial intelligence tool.

CoAuthor is based on GPT-3, one of the recent large language models from OpenAI, which was trained on a massive collection of existing text from the internet. It might seem a tall order to expect a model built on existing text to create something original. Lee and her collaborators wanted to see whether it could nudge writers to deviate from their routines and go beyond their comfort zone (e.g., the vocabulary they use daily), so that they would write something they would not have written otherwise. They also wanted to understand the impact such collaborations have on a writer’s personal sense of accomplishment and ownership.

“We want to see if AI can help humans achieve the intangible qualities of great writing,” Lee says.

Machines are good at doing search and retrieval and spotting connections. Humans are good at spotting creativity. If you think this article is written well, it is because of the human author, not in spite of it.

AI/Human Collaboration

The goal, Lee says, was not to build a system that can make humans write better and faster. Instead, it was to investigate the potential of recent large language models to aid in the writing process and to see where they succeed and fail. The team built CoAuthor as an interface that records writing sessions at the keystroke level. This let them curate a large dataset of interactions as writers worked with GPT-3 and analyze how human writers and AI collaborate.

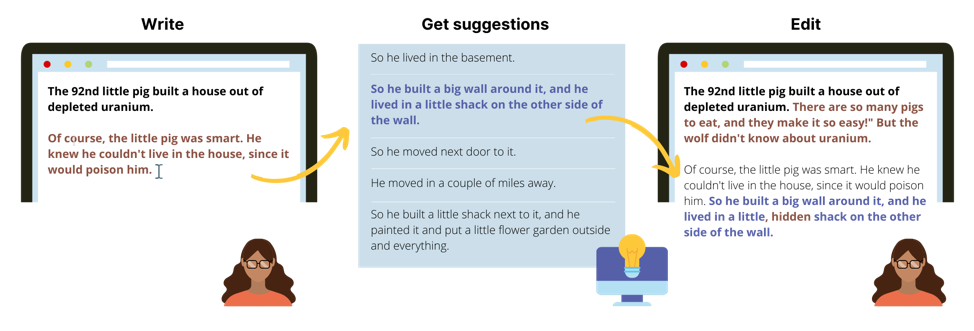

The researchers engaged more than 60 people to write more than 1,440 stories and essays, each one assisted by CoAuthor. As the writer types, he or she can press the “tab” key, and the system presents five suggestions generated by GPT-3. The writer can then accept one of the suggestions, modify it, or disregard them altogether, based on his or her own sensibilities.

As a dataset, CoAuthor keeps track of all interactions between writers and the model, including text insertion and deletion as well as cursor movement and suggestion selection. With this rich interaction data, researchers can analyze when a writer requests suggestions, how often the writer accepts suggestions, which suggestions get accepted, how they were edited, and how they influenced the subsequent writing.
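To make one of these analyses concrete, here is a minimal Python sketch of "how often the writer accepts suggestions." It computes a per-session acceptance rate from an interaction log. The `Event` record and the event names `"request"`, `"accept"`, `"dismiss"`, and `"insert"` are hypothetical stand-ins chosen for illustration; they are assumptions, not CoAuthor's actual dataset schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Event:
    """One logged interaction (hypothetical schema, for illustration only)."""
    session_id: str
    name: str  # assumed values: "request", "accept", "dismiss", "insert", ...


def acceptance_rates(events: list[Event]) -> dict[str, float]:
    """Return, per session, the fraction of suggestion requests that ended in an accept."""
    requests = Counter(e.session_id for e in events if e.name == "request")
    accepts = Counter(e.session_id for e in events if e.name == "accept")
    # Only sessions that requested at least one suggestion appear in `requests`,
    # so there is no division by zero; Counter returns 0 for sessions with no accepts.
    return {sid: accepts[sid] / n for sid, n in requests.items()}


log = [
    Event("s1", "insert"),
    Event("s1", "request"),
    Event("s1", "accept"),
    Event("s1", "request"),
    Event("s1", "dismiss"),
    Event("s2", "request"),
    Event("s2", "dismiss"),
]
print(acceptance_rates(log))  # {'s1': 0.5, 's2': 0.0}
```

A keystroke-level log also supports finer questions than this one, such as how an accepted suggestion was edited afterward. This sketch only counts the two event types it needs.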

As an analytical tool, CoAuthor can help gauge how “helpful” accepted suggestions are to the human writer. Conversely, rejected suggestions can serve as a proxy for the writer’s taste, which could be used to improve the suggestions of future language models.

After each writing session, the writers took a survey about their relative satisfaction with the collaboration and their own sense of productivity and ownership in the resulting work. Often, the writers said, the words and ideas proposed by CoAuthor were welcomed as both new and useful. At other times, the suggestions were disregarded because they took the writer in a different direction than intended. And sometimes they felt that the suggestions were too repetitive or vague and, as a result, didn’t add much value to their stories and essays.

Lee found that the degree of collaboration between GPT-3 and the writers seemed to have little effect on their satisfaction with the writing process, but it could have a negative influence on their sense of ownership of the resulting text. On the other hand, many participants enjoyed taking new ideas from the model’s suggestions and using them in their subsequent writing.

“I especially found the names helpful,” wrote one of CoAuthor’s participants in a post-session survey. “I was actually trying to think of a stereotypical rich jock name and the AI provided me with [one]. Perfect!”

CoAuthor’s creators also found that the use of large language models increased writer productivity, as measured by the number of words produced and the amount of time spent writing. On a purely practical but intriguing level, sentences co-written by a human writer and the model seem to have fewer spelling and grammatical errors than human-only writing, as well as higher vocabulary diversity.

“The best collaborations between a human and a model seem to be when the writer uses his or her own creative sensibilities to evaluate the suggestions and decides what to keep and what to leave out,” Lee explains. “Overall, they felt CoAuthor brings new ideas to the table and improves their productivity and their artistry.”

Cause for Concern?

In the near term, some technical hurdles will have to be surmounted. It is well documented that large language models are prone to generating biased and toxic language. Currently, CoAuthor filters out potentially problematic suggestions based on a list of banned words. However, there is an inherent tension between filtering more extensively and evaluating the language model’s capabilities accurately. The more suggestions a filter removes, the less a study reveals about what the model would produce on its own.
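The banned-word approach described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not CoAuthor's actual filter. The word list `BANNED_WORDS` is a hypothetical placeholder with mild stand-in words, and whole-word, case-insensitive matching is an assumed design choice.

```python
import re

# Hypothetical placeholder list (lowercase); CoAuthor's real banned-word list is not reproduced here.
BANNED_WORDS = {"heck", "darn"}


def filter_suggestions(suggestions: list[str], banned: set[str] = BANNED_WORDS) -> list[str]:
    """Keep only suggestions that contain none of the banned words.

    Matching is case-insensitive and on whole words, so a banned word
    embedded inside a longer word does not trigger the filter.
    """
    kept = []
    for text in suggestions:
        tokens = {token.lower() for token in re.findall(r"\w+", text)}
        if tokens.isdisjoint(banned):
            kept.append(text)
    return kept


candidates = ["The night was calm.", "What the heck happened?", "Darn it, she said."]
print(filter_suggestions(candidates))  # ['The night was calm.']
```

A word list like this is cheap and transparent, but it is also blunt. It blocks harmless uses of a listed word and lets through harmful text that avoids the list. Making it stricter removes more suggestions, which is exactly the tension with evaluating the model's raw capabilities.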

In the end, maybe AI capable of producing masterpieces is not the kind that doles out polished prose or provocative poetry. It may instead be the kind that offers suggestions to complement a human’s writing. This is already starting to happen, as CoAuthor shows. Still, however the wordsmith uses technology for aid, artificial intelligence that writes well is a long way off.

Stanford HAI's mission is to advance AI research, education, policy, and practice to improve the human condition.