Training a Robot to Shape Letters from Play-Doh

Stanford’s RoboCraft learns to mold deformable objects from visual cues, a capability that could lead to more useful home assistants.

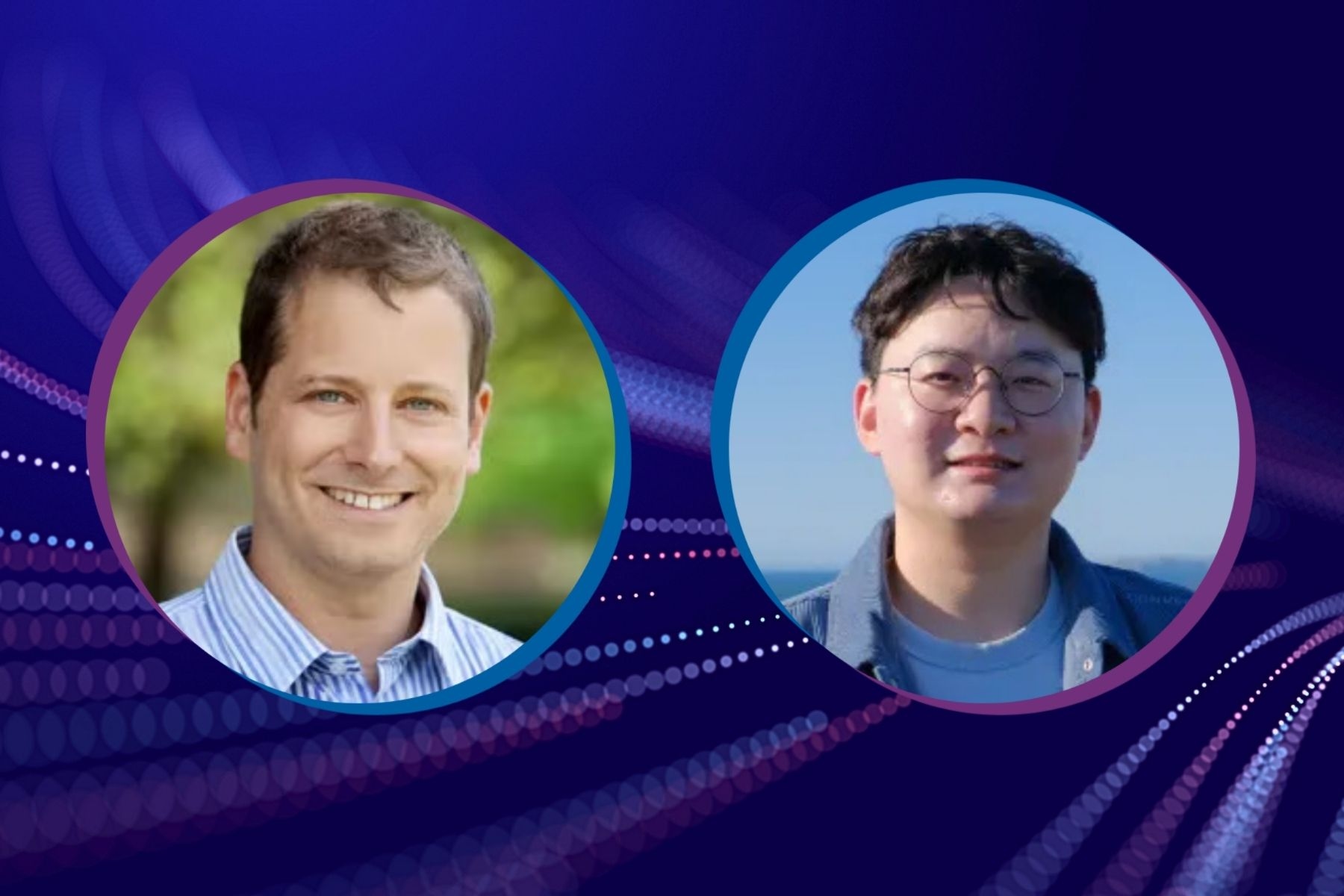

Robots can competently assemble, pack, and otherwise manipulate objects that are made from solid materials, but they are far less adept when it comes to handling materials that don’t hold their shape, like clothing, mashed potatoes, sushi, or ground beef, says Jiajun Wu, assistant professor of computer science at Stanford University and an affiliate of Stanford HAI.

Humans, on the other hand, can capably manipulate deformable materials even from a young age. “We have to deal with deformable objects all the time,” Wu says. “If we want robots to have human-like capabilities, we need them to be able to predict how things like plasticine or Play-Doh will deform when we apply specific actions to them.”

Wu’s team at Stanford recently trained RoboCraft, a robot with two parallel finger-like grippers, to mold Play-Doh into the letters of the alphabet. “You could regard this initial work as a first application for baking alphabet-shaped cookies,” says Huazhe Xu, a postdoc in Wu’s lab and one of the lead authors of the team’s paper, which was published recently at the top robotics conference, Robotics: Science and Systems.

Read the paper, "RoboCraft: Learning to See, Simulate, and Shape Elasto-Plastic Objects with Graph Networks"

Next, the team will give RoboCraft some new tools – a rolling pin, a stamp, and a mold – and will train it to shape dumplings, says Haochen Shi, a graduate student in Wu’s lab and co-lead author of the paper.

In the long run, the team hopes the project will lead to robots that can help with various household tasks. “Once robots can understand and interact well with deformable objects, they will be able to do other things that are really more practical and helpful to humans, such as fold laundry or make sushi or noodles,” Wu says.

In addition to the work’s potential practical applications, it also marks a technical advance: The robot learns to manipulate deformable objects directly from visual input gathered from four cameras. “If you want to develop a robot that can be useful in the household, it will have to, like humans, rely on visual capability,” Wu says. “RoboCraft is getting us a little closer to that possibility.”

Molding Play-Doh from Visual Input Alone

In the past, researchers training robots to manipulate deformable objects have relied on a physics simulator to model the forces being applied and to understand the dynamics of the object. In addition, they often made assumptions about the shape of the object and the material being deformed. By contrast, Wu’s team trained RoboCraft only from visual information. “That’s the innovation of this paper,” Wu says.

The team’s approach involved three steps: teaching the robot how to see the Play-Doh as it changes; teaching it how to predict the Play-Doh’s shape after it’s pinched; and training it to optimize for a particular target shape.

The team collected visual information from four cameras that surrounded the robot as it pinched and released a rectangular blob of Play-Doh. In their machine learning system, they represented the Play-Doh’s initial and resulting shapes as a particle cloud: Each time the grippers pinched and released the Play-Doh, the new shape was represented as a new cloud.
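One way to picture the particle-cloud representation is as a fixed-size list of 3D points drawn from the much denser set of points that the cameras see. The sketch below is a minimal illustration, not the team's pipeline: the dense points are random stand-ins for merged camera data, and the use of farthest-point sampling (the hypothetical `farthest_point_sample` helper) is an assumption made here to get an evenly spread cloud.

```python
import math
import random

def farthest_point_sample(points, k):
    """Pick k well-spread points from a list of (x, y, z) tuples."""
    if k >= len(points):
        return list(points)
    chosen = [points[0]]
    # Distance from every point to its nearest already-chosen point.
    nearest = [math.dist(p, chosen[0]) for p in points]
    while len(chosen) < k:
        # Take the point that is farthest from everything chosen so far.
        idx = max(range(len(points)), key=lambda i: nearest[i])
        chosen.append(points[idx])
        for i, p in enumerate(points):
            nearest[i] = min(nearest[i], math.dist(p, points[idx]))
    return chosen

# Hypothetical stand-in for points merged from four camera views
# of a rectangular block (arbitrary units).
rng = random.Random(0)
dense = [(rng.uniform(0, 4), rng.uniform(0, 2), rng.uniform(0, 1))
         for _ in range(500)]

# One "particle cloud": a fixed number of particles describing the shape.
cloud = farthest_point_sample(dense, 50)
```

Each pinch-and-release would then yield a new cloud of the same size, so a sequence of interactions becomes a sequence of comparable point sets.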

The system then learned a dynamics model that could predict the effect of each Play-Doh pinch on the particles’ movement. But because Play-Doh is a uniform, smooth material, the particle approach presented a problem: As the Play-Doh deformed, there was no way to track the movement of individual particles from one timestep to the next. To address this issue, the team used a graph neural network approach that blended two different statistical methods for pairing up points between the timesteps. Each method then minimized the total distance between the pairs of points. After allowing RoboCraft to pinch the Play-Doh 150 times (50 cycles of three pinches each) – about 10 minutes of real-world interactions – the dynamics model learned to predict how any given pinch would alter the particle cloud’s shape.
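To make the point-pairing idea concrete, here is a small sketch of two ways to compare unordered point sets. The article does not name the two methods, so the choice below is an assumption: a Chamfer-style distance (each point paired with its nearest neighbor) and a one-to-one matching distance in the spirit of the earth mover's distance. The function names and the `weight` parameter are hypothetical, and the one-to-one version uses brute force that is only practical for a handful of points.

```python
import math
from itertools import permutations

def chamfer(a, b):
    """Mean nearest-neighbor distance, summed over both directions."""
    a_to_b = sum(min(math.dist(p, q) for q in b) for p in a) / len(a)
    b_to_a = sum(min(math.dist(q, p) for p in a) for q in b) / len(b)
    return a_to_b + b_to_a

def one_to_one(a, b):
    """Smallest mean distance over all one-to-one pairings.

    Brute force over permutations: equal-size, tiny sets only.
    """
    assert len(a) == len(b)
    return min(
        sum(math.dist(p, q) for p, q in zip(a, perm)) / len(a)
        for perm in permutations(b)
    )

def blended_loss(pred, target, weight=0.5):
    """Weighted mix of the two distances (the weight is an assumption)."""
    return weight * chamfer(pred, target) + (1 - weight) * one_to_one(pred, target)

predicted = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
# Same shape as `predicted`, but the points are listed in a different order.
observed = [(2.0, 0.0), (0.0, 0.0), (1.0, 0.0)]
# Same shape moved up by one unit.
shifted = [(x, y + 1.0) for x, y in predicted]

loss_same = blended_loss(predicted, observed)
loss_shifted = blended_loss(predicted, shifted)
```

Because neither distance depends on the order of the points, the loss is zero for two clouds that describe the same shape, which is what lets a model be trained without tracking individual particles between timesteps.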

Finally, to teach the robot to produce specific target shapes, the team had to optimize the sequence of actions the robot would take to reach a target shape – a letter of the alphabet. “There are so many different ways of pushing and interacting with the particles,” Wu says. “But we teach the system to take the actions that will produce the shape that’s closest to the target.”
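The idea of choosing the actions whose predicted result is closest to the target can be sketched with a simple sampling planner. Everything below is a toy under stated assumptions: `toy_dynamics` is a hypothetical stand-in for the learned dynamics model (the dough is reduced to four column heights), and random sampling of candidate sequences is one generic planning strategy, not necessarily the one the team used.

```python
import random

def toy_dynamics(state, action):
    """Hypothetical stand-in for the learned model.

    Pinching column i lowers its height by `depth` (never below zero).
    """
    i, depth = action
    new = list(state)
    new[i] = max(0.0, new[i] - depth)
    return new

def shape_error(state, target):
    """Squared difference between a predicted shape and the target."""
    return sum((s - t) ** 2 for s, t in zip(state, target))

def rollout(state, actions):
    """Predict the final shape after applying a sequence of pinches."""
    for action in actions:
        state = toy_dynamics(state, action)
    return state

def plan(state, target, horizon=3, samples=2000, seed=0):
    """Sample pinch sequences and keep the one predicted to land closest."""
    rng = random.Random(seed)
    best_actions, best_err = [], shape_error(state, target)
    for _ in range(samples):
        actions = [(rng.randrange(len(state)), rng.uniform(0.0, 1.0))
                   for _ in range(horizon)]
        err = shape_error(rollout(state, actions), target)
        if err < best_err:
            best_actions, best_err = actions, err
    return best_actions, best_err

start = [1.0, 1.0, 1.0, 1.0]
target = [1.0, 0.4, 0.4, 1.0]  # a dent in the middle two columns
actions, err = plan(start, target)
```

The planner never touches the real material: it only queries the model, which is why an accurate dynamics model matters so much for the final shapes.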

From the Alphabet to Dumplings

After training RoboCraft using random pinches of the Play-Doh block, the team tasked the robot with shaping the letters of the alphabet. The resulting Play-Doh shapes, though rough, do look like the letters they were meant to represent. In fact, the robot’s letter shapes were more recognizable than those created by humans operating the same two parallel finger-like grippers.

A limitation of the work is that it has only been tested on Play-Doh using a robot with just two grippers, says Yunzhu Li, an MIT graduate student who collaborated on the project and will soon join Wu’s lab as a postdoc. The team will have to overcome some technical challenges as they add more tools and materials, he says, but they are excited for the future. “We look forward to innovating to extend our method to tasks like spreading butter on a piece of bread or making salads.”

In fact, Wu’s team is currently developing the next iteration of RoboCraft, which will make dumplings from dough and a prepared filling. Although the team’s dumpling experiments are currently being run with Play-Doh, they hope to switch to real food ingredients soon. The project requires training the robot to use multiple tools and to handle multiple materials, Shi says. The robot will roll out a flour-based dough, add a meat or vegetable filling, and then mold and seal the dumpling into a desired shape. “We are expecting they will not only look like real dumplings,” Shi says, “but taste like them too.”
