Using “Pile of Law,” a dataset of legal materials, Stanford researchers explore filtering private or toxic content from training data for foundation models.

Toward Fairness in Health Care Training Data

This brief highlights the lack of geographic representation in medical-imaging AI training data and calls for nationwide, diversity-focused data-sharing initiatives.

Computer scientists must identify sources of bias, de-bias training data and develop artificial-intelligence algorithms that are robust to skews in the data, argue James Zou and Londa Schiebinger in Nature.

In risk modeling, AI researchers take a more-is-better approach to training data, but a new study argues that a less-is-more approach may be preferable.

A new study reveals that models aren’t reporting enough information, leaving users blind to potential model errors such as flawed training data and calibration drift.

The lessons learned from the fine-tuning and evaluation of Vietnamese LLMs could help broaden access to models beyond English speakers.

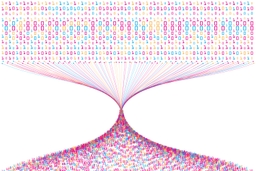

Whose Opinions Do Language Models Reflect?

This brief introduces a quantitative framework that policymakers can use to evaluate the behavior of language models and assess what kinds of opinions they reflect.