Wearable Device Allows Humans To Control Robots with Brain Waves

Wearing a non-invasive, electronic cap that reads the wearer’s EEGs, humans can now command robots to perform a range of everyday tasks.

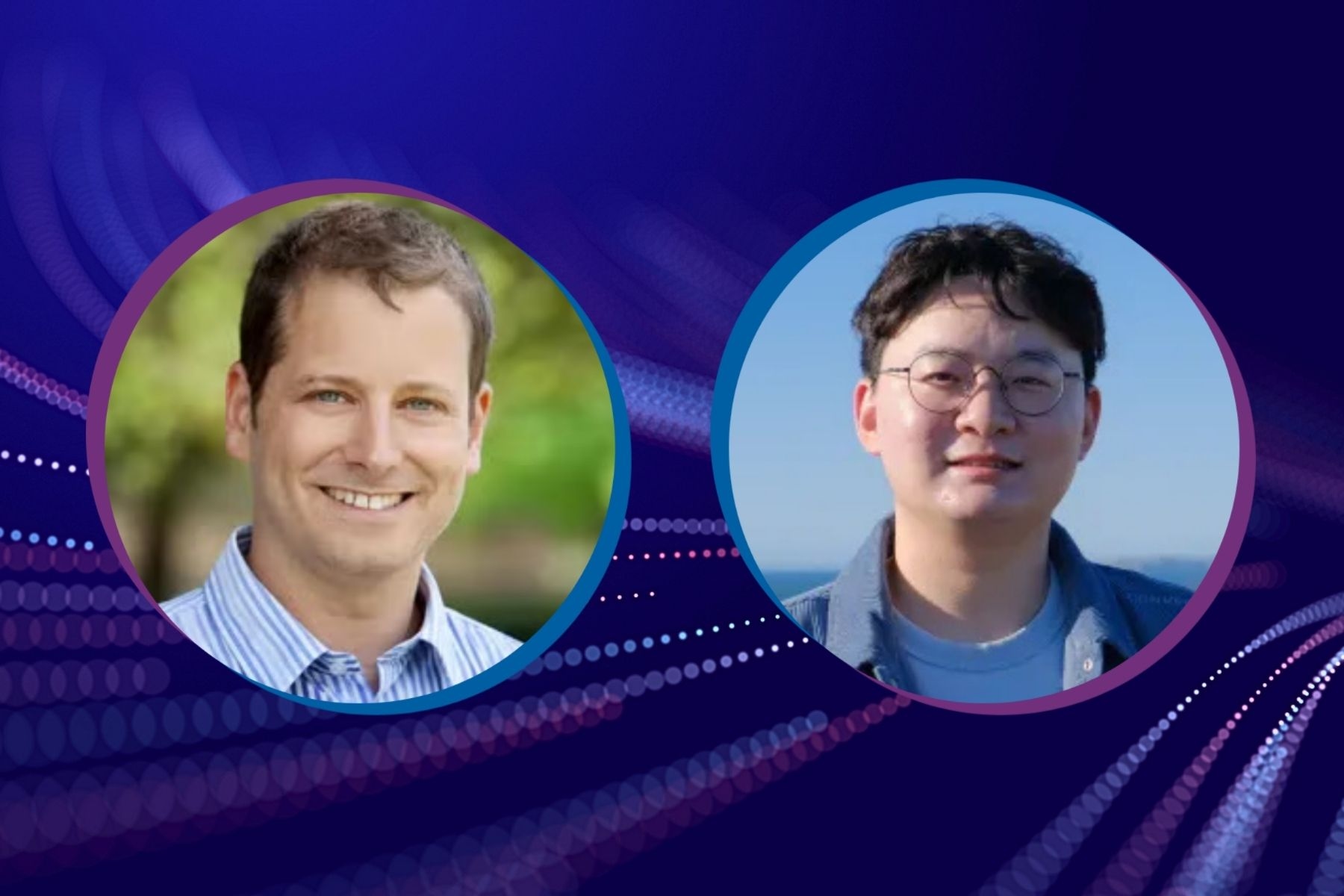

Researchers at Stanford University have developed a wearable, electronic cap able to read brain waves (EEGs) and allow people to direct robots to move objects, clean countertops, play tic-tac-toe, pet a robot dog, and even cook a simple meal using only their brain signals. The researchers call it NOIR, short for Neural Signal Operated Intelligent Robots (no cynical crime drama involved).

“The wearer first focuses on an object — a bowl or a mixer, for instance — and what he wants to happen … pour bowl into mixer … and the robot does it,” says Ruohan Zhang, a postdoctoral researcher in Stanford’s Vision and Learning Lab and co-first author of the paper introducing NOIR, which was presented recently at the Conference on Robot Learning.

Zhang has a background in both neuroscience and computer science and says he has been thinking about NOIR for nearly a decade. While it sounds simple, the science behind NOIR is anything but. EEGs (electroencephalograms), records of the electrical activity in the brain, are generally weak signals, and using them to determine which object the wearer is paying attention to and how the wearer wants to interact with it is extremely hard, Zhang explains.

Read the full paper, NOIR: Neural Signal Operated Intelligent Robots for Everyday Activities

“You cannot do it by just asking them to think about an object. Actually, there are pretty mature techniques from neuroscience research, known as steady-state visually evoked potential (SSVEP) and motor imagery,” he says.

He offers an example: A person focuses on an object, say a plate, on a computer screen. How does NOIR know which object the person is focusing on? NOIR attaches a “flickering” mask to each object, and each mask flickers at a different frequency. The flicker of the attended object elicits a strong response at the matching frequency in the visual cortex of the brain. NOIR can “see” (identify) that object in the EEG flicker patterns emanating from the wearer’s visual cortex.
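The frequency-tagging idea described above can be illustrated with a toy simulation. This is only a minimal sketch under stated assumptions, not the method used in NOIR: it picks the candidate flicker frequency with the most spectral power in a single simulated channel, whereas real SSVEP decoders typically use multi-channel statistical methods. The function names, the object-to-frequency mapping (8, 10, and 12 Hz), the 250 Hz sampling rate, and the noise model are all hypothetical choices made for illustration.

```python
import math
import random

# Hypothetical mapping: each on-screen object's mask flickers at its own frequency (Hz).
OBJECT_FREQS = {"plate": 8.0, "bowl": 10.0, "mixer": 12.0}
SAMPLE_RATE_HZ = 250  # assumed EEG sampling rate, for illustration only


def simulate_eeg(attended_freq_hz, sample_rate_hz=SAMPLE_RATE_HZ,
                 seconds=2.0, noise_std=1.0, seed=0):
    """Toy single-channel signal: a sinusoid at the attended flicker
    frequency buried in Gaussian noise (not a realistic EEG model)."""
    rng = random.Random(seed)
    n = int(sample_rate_hz * seconds)
    return [
        math.sin(2 * math.pi * attended_freq_hz * i / sample_rate_hz)
        + rng.gauss(0.0, noise_std)
        for i in range(n)
    ]


def band_power(signal, freq_hz, sample_rate_hz):
    """Power of the signal at one frequency, computed by projecting it
    onto a cosine and a sine at that frequency (a single DFT bin)."""
    n = len(signal)
    cos_sum = sum(x * math.cos(2 * math.pi * freq_hz * i / sample_rate_hz)
                  for i, x in enumerate(signal))
    sin_sum = sum(x * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
                  for i, x in enumerate(signal))
    return (cos_sum ** 2 + sin_sum ** 2) / n ** 2


def identify_object(signal, object_freqs, sample_rate_hz):
    """Return the object whose flicker frequency carries the most power."""
    powers = {name: band_power(signal, freq, sample_rate_hz)
              for name, freq in object_freqs.items()}
    return max(powers, key=powers.get)


# Simulate a wearer looking at the bowl, then decode which object it was.
eeg = simulate_eeg(OBJECT_FREQS["bowl"])
print(identify_object(eeg, OBJECT_FREQS, SAMPLE_RATE_HZ))
```

In this simulation the decoder recovers the attended object because the tagged frequency stands out well above the noise; with real EEG the signal is far weaker, which is part of why Zhang describes the problem as extremely hard.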

By reading these patterns, generated as visual neurons fire while the person looks at different objects, researchers can identify which object the wearer is attending to. Once the system has determined which object the user is paying attention to, it infers what the user wants to do with it: pick up the white bowl, move it to the mixer, pour the bowl into the mixer. The user imagines these physical actions, and they, too, are identifiable in the brain waves.

“It's pretty amazing neuroscience. It's beautiful, actually,” Zhang says.

The electronic cap sits atop the wearer’s head, but it is not invasive. No probes or electrodes are inserted into the brain itself. Zhang adds that, using robot learning algorithms, NOIR can adapt to new users and predict their wishes with a little training.

Researchers in robotics have so far developed systems that let humans communicate their intentions to robots in many ways: button presses, eye tracking, facial expressions, and even written and spoken language. EEGs and other types of brain signals, Zhang says, are the next natural step in that progression.

“We think NOIR has the potential to enhance the ways humans can interact with robots using direct, neural communication that could be particularly helpful for people with paralysis or motor dysfunction, or for the elderly with limited physical abilities,” Zhang says of the promise ahead.

Before that day can arrive, however, much work lies ahead. NOIR is currently a proof of concept showing that AI can successfully decode complex brain wave activity and turn it into robotic action. For now, the actions NOIR can perform are limited to 20 or so relatively simple tasks, like slicing a banana, playing checkers, or untying a ribbon, but Zhang and others on the team at the Stanford Vision and Learning Lab hope to expand and hone those tasks in subsequent research.

“While not perfect, NOIR is much more capable compared with the previous generations of this technology and points to a day where thought-controlled robots might be in every home,” Zhang says.
