Brains Could Help Solve a Fundamental Problem in Computer Engineering

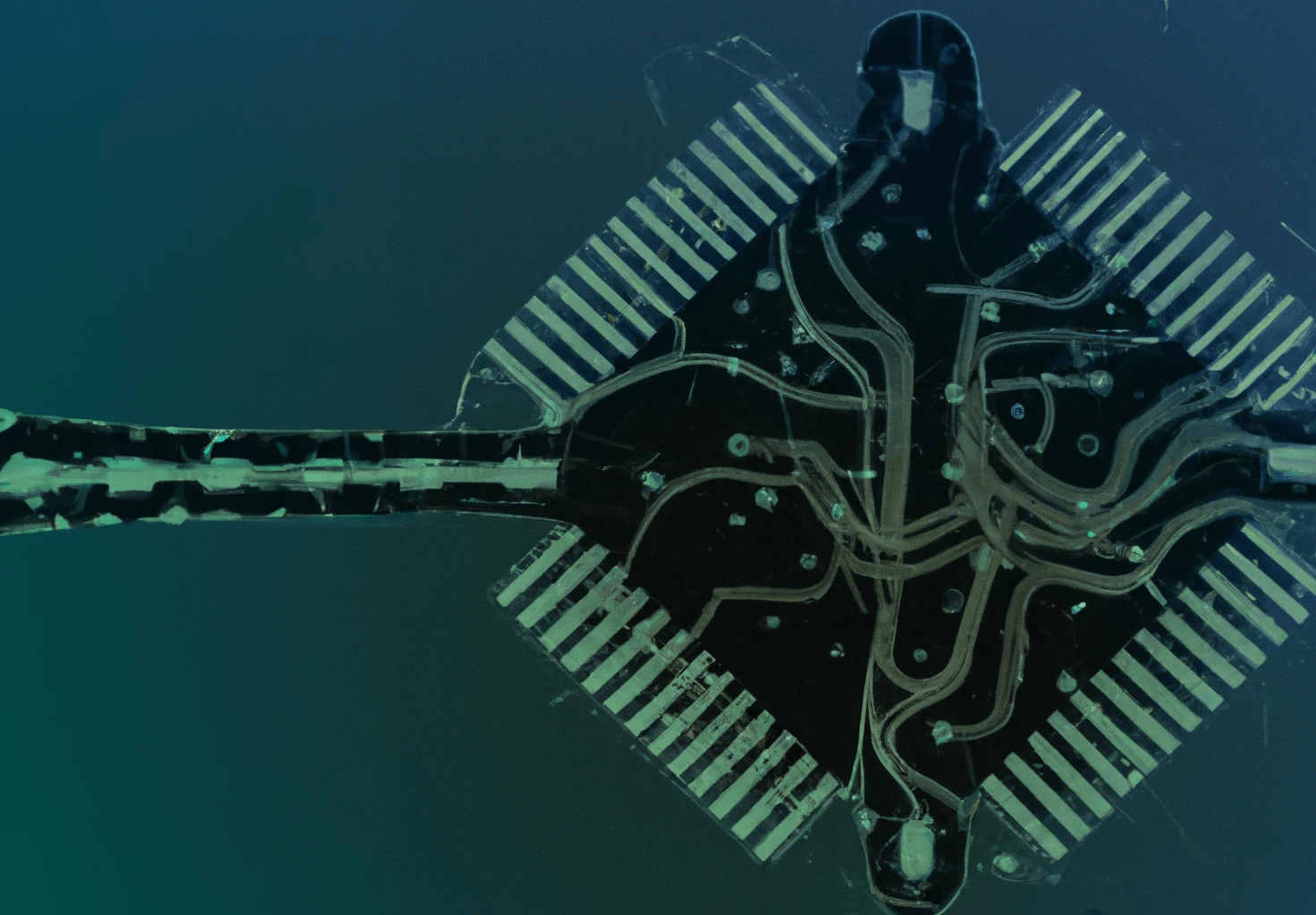

A Stanford professor looks toward dendrites for a completely novel way of thinking about computer chips.

The world’s first general-purpose electronic computer took up an entire room at the University of Pennsylvania and gobbled up so much electricity that its operation was rumored to dim lights across the city of Philadelphia. Called the ENIAC, it looked more like an appliance store than a modern-day laptop, with numerous, hulking components lined up against the room’s walls. And the differences weren’t merely skin-deep—unlike modern computers, which operate in binary, the ENIAC used base 10.

This seemingly innocent choice had some unexpected consequences. The ENIAC ran using electrified glass cylinders called vacuum tubes, and encoding the digits from zero to nine—not to mention all of the potential ways they could be added and multiplied and divided—took many, many vacuum tubes. The tubes often failed, and the six million dollar computer was frequently unusable. So when it came time to design ENIAC’s successor, EDVAC, the engineers decided to use binary instead. With fewer vacuum tubes, the computer was far more reliable.

As technology advanced, vacuum tubes were eventually replaced with the transistors that computers use today, and the original motivation for using binary became irrelevant. These days, computer engineers must contend with a different problem: heat. As Stanford bioengineering professor Kwabena Boahen detailed in a Nature perspective, published Nov. 30, 2022, the problem now is the wires that allow transistors to communicate with one another. The longer the wires are, the more energy they use; the wires can be shortened by stacking transistors on one another in three dimensions, but then the chips don’t dissipate enough heat.

In recent years, these technological limitations have become far more pressing. Deep neural networks have radically expanded the limits of artificial intelligence—but they have also created a monstrous demand for computational resources, and these resources present an enormous financial and environmental burden. Training GPT-3, a text predictor so accurate that it easily tricks people into thinking its words were written by a human, costs $4.6 million and emits a sobering volume of carbon dioxide—as much as 1,300 cars, according to Boahen.

With the free time afforded by the pandemic, Boahen, who is a faculty affiliate at the Wu Tsai Neurosciences Institute at Stanford and the Stanford Institute for Human-Centered AI (HAI), applied himself single-mindedly to this problem. “Every 10 years, I realize some blind spot that I have or some dogma that I’ve accepted,” he says. “I call it ‘raising my consciousness.’”

This time around, raising his consciousness meant looking toward dendrites, the spindly protrusions that neurons use to detect signals, for a completely novel way of thinking about computer chips. And, as he writes in Nature, he thinks he’s figured out how to make chips so efficient that the enormous GPT-3 language prediction neural network could one day be run on a cell phone. Just as Feynman posited the “quantum supremacy” of quantum computers over traditional computers, Boahen wants to work toward a “neural supremacy.”

Kwabena Boahen is a professor of bioengineering and an affiliate of the Wu Tsai Neurosciences Institute and the Stanford Institute for Human-Centered Artificial Intelligence (HAI).

This push toward neural supremacy has been a long time coming. Boahen designed his first brain-inspired computer chip back in the 1980s, and since then, he has been a leader in the field of neuromorphic engineering, which seeks to design computers based on our knowledge about how the brain looks and functions. This approach makes a great deal of sense when it comes to energy usage. The human brain uses significantly less power than a laptop, and yet it easily undertakes computational tasks—language prediction, for example—that no standard laptop could handle. And as brains get bigger, their energetic demands increase relatively slowly, whereas bigger clusters of computer chips need vastly more energy to work.

Making chips more energy efficient means deploying less energy to send information from chip to chip. Shortening wires can help, to the extent that it’s physically feasible, but reducing how heavily the wires are used can make a huge difference as well. One way to use fewer signals is to invest each one with more meaning—to move from a binary system, where the only possible signals are zero and one, to a much higher base.
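To make the trade-off concrete, here is a minimal Python sketch (an illustration, not code from Boahen's paper) that counts how many signals it takes to convey the same value as the base grows. The function name `digits_needed` is hypothetical, and treating one signal as one digit is a simplifying assumption.

```python
def digits_needed(value: int, base: int) -> int:
    """Count the digits (signals) needed to write `value` in `base`.

    Assumption: each signal carries exactly one digit of the chosen base.
    """
    if base < 2:
        raise ValueError("base must be at least 2")
    if value < 0:
        raise ValueError("value must be non-negative")

    count = 1
    # Each integer division by the base strips off one digit.
    while value >= base:
        value //= base
        count += 1
    return count


print(digits_needed(1_000_000, 2))     # 20 binary signals
print(digits_needed(1_000_000, 10))    # 7 decimal signals
print(digits_needed(1_000_000, 1000))  # 3 base-1000 signals
```

The same value needs 20 signals in binary but only 3 in base 1,000, which is the sense in which richer signals can be sparser.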

And that’s where the brain comes in. To figure out how to implement these sparser, richer signals in a computer chip, Boahen looked at the way that dendrites process information.

A dendrite, researchers have found, responds more strongly when it receives signals consecutively from its tip to its stem than when it receives these signals consecutively from its stem to its tip. Building off of these empirical results, Boahen devised a computational model of a dendrite that didn’t just discriminate inward from outward sequences, but in fact would respond only if it received one specific sequence along a short stretch. So if that simulated stretch of dendrite responds to its inputs, the response encodes very specific information—it tells you exactly which neurons have fired, and in what sequence they fired.
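The order-sensitivity described above can be illustrated with a toy Python sketch. This is not Boahen's computational model: it ignores timing and biophysics entirely, the class name `DendriteStretch` is hypothetical, and it assumes each neuron connects to the stretch at exactly one site.

```python
class DendriteStretch:
    """Toy detector that responds only to one specific order of inputs."""

    def __init__(self, preferred_order):
        order = tuple(preferred_order)
        if not order:
            raise ValueError("preferred_order must not be empty")
        # Assumption: one site per neuron, so no neuron appears twice.
        if len(set(order)) != len(order):
            raise ValueError("each neuron may appear only once")
        self.preferred_order = order
        self.position = 0  # how far along the stretch the sequence has progressed

    def receive(self, neuron_id) -> bool:
        """Feed one input; return True only when the full sequence completes."""
        if neuron_id == self.preferred_order[self.position]:
            self.position += 1
        elif neuron_id == self.preferred_order[0]:
            # The input restarts the sequence from the first site.
            self.position = 1
        else:
            self.position = 0

        if self.position == len(self.preferred_order):
            self.position = 0
            return True
        return False


stretch = DendriteStretch(["a", "b", "c"])
print([stretch.receive(n) for n in ["a", "b", "c"]])  # [False, False, True]
print([stretch.receive(n) for n in ["c", "b", "a"]])  # [False, False, False]
```

The same three inputs produce a response in one order and none in the reverse order, so a response reveals both which neurons fired and in what sequence.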

This characteristic of dendrites, Boahen says, can be used to encode numbers in much higher bases than base two. If each neuron is assigned a different digit, and the dendrite responds only to a specific sequence of neurons, then that dendrite effectively recognizes a particular number in a base system determined by the number of neurons, just as a sequence of button presses on a calculator with 10 numerical buttons indicates a specific base 10 number.
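The calculator analogy can be worked through in a few lines of Python. This is a sketch under stated assumptions rather than anything from the Nature article: neurons are labeled with integer digits from 0 up to one less than the number of neurons, and the function name `sequence_to_number` is hypothetical.

```python
def sequence_to_number(sequence, num_neurons: int) -> int:
    """Read a firing sequence as a number whose base is the neuron count.

    Assumption: neuron i stands for digit i, most significant digit first.
    """
    if num_neurons < 2:
        raise ValueError("need at least two neurons to form a base")

    value = 0
    for digit in sequence:
        if not 0 <= digit < num_neurons:
            raise ValueError(f"neuron {digit} is outside the base")
        # Shift the digits read so far one place left, then add the new one.
        value = value * num_neurons + digit
    return value


print(sequence_to_number([4, 2, 7], num_neurons=10))  # 427, like calculator keys
print(sequence_to_number([1, 0], num_neurons=16))     # 16, two signals in base 16
```

With 10 neurons, the sequence 4, 2, 7 reads as the base 10 number 427; with more neurons, the same number of signals can pick out a value from a much larger range.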

The challenge now is translating this biological mechanism into silicon. Ushering in the age of “dendrocentric learning” is no mean feat, but now that Boahen’s consciousness has been raised, he’s all in. With support from the Wu Tsai Neurosciences Institute, Stanford HAI, and the National Science Foundation, he’s bringing together neuroscientists, engineers, and computer scientists at Stanford and beyond to make it happen.

Forty years on from Feynman’s first explorations of quantum computing, scientists still haven’t built practically useful quantum computers, and Boahen acknowledges that he might be looking at a similar timeline when it comes to dendrocentric computing. But at this point, there’s no alternative for him. “Once you see it, you can’t unsee it,” he says. “I’m not the same guy that I was before.”

The work published in Boahen’s new Nature article was supported by the Stanford Institute for Human-Centered Artificial Intelligence (HAI), US Office of Naval Research, US National Science Foundation, Stanford Medical Center Development, C. Reynolds and GrAI Matter Labs.

This story was first published by the Wu Tsai Neurosciences Institute.